Kafka Cluster Requirements

| Component | Requirement |
|---|---|
| Spark Streaming for cluster streaming modes | Spark version 2.1 or later |
| Apache Kafka | Spark Streaming on YARN requires a Cloudera or Hortonworks distribution of an Apache Kafka cluster version 0.10.0.0 or later. Spark Streaming on Mesos requires Apache Kafka on Apache Mesos. |

sdc.env.sh or sdcd.env.sh. Do not change this environment variable value.

Kafka Consumer Maximum Batch Size

When using a Kafka Consumer origin in cluster mode, the Max Batch Size property is ignored. Instead, the effective batch size is <Batch Wait Time> x <Rate Limit Per Partition>.

For example, if Batch Wait Time is 60 seconds and Rate Limit Per Partition is 1000 messages/second, then the effective batch size from the Spark Streaming perspective is 60 x 1000 = 60000 messages. In this example, there is only one partition, so only one cluster pipeline is spawned and the batch size for that pipeline is 60000.

If there are two partitions, then the effective batch size from the Spark Streaming perspective is 60 x 1000 x 2 = 120000 messages. By default, two cluster pipelines are created. If the number of messages in each partition is equal, then each pipeline receives 60000 messages in one batch. If, however, all 120000 messages are in a single partition, then the cluster pipeline processing that partition receives all 120000 messages.

To reduce the maximum batch size, either reduce the wait time or reduce the rate limit per partition. Similarly, to increase the maximum batch size, either increase the wait time or increase the rate limit per partition.
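The arithmetic above can be sketched in Python. This is only an illustration of the calculation, not Data Collector code: the function name `effective_batch_size` is hypothetical, and the sketch assumes Batch Wait Time is expressed in seconds, as in the example.

```python
def effective_batch_size(batch_wait_secs, rate_limit_per_partition, partitions=1):
    """Messages Spark Streaming can pull in one batch across all partitions."""
    return batch_wait_secs * rate_limit_per_partition * partitions


# One partition: a single cluster pipeline receives the whole batch.
single_partition = effective_batch_size(60, 1000)

# Two partitions: the total doubles; with evenly filled partitions,
# each of the two pipelines receives half of it.
two_partitions = effective_batch_size(60, 1000, partitions=2)
per_pipeline_when_even = two_partitions // 2

# Halving the wait time halves the maximum batch size.
shorter_wait = effective_batch_size(30, 1000, partitions=2)

print(single_partition, two_partitions, per_pipeline_when_even, shorter_wait)
```

Lowering either the wait time or the rate limit scales the result linearly, which matches the tuning guidance above.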

Configuring Cluster YARN Streaming for Kafka

Complete the following steps to configure a cluster pipeline to read from a Kafka cluster on YARN:

Enabling Security for Cluster YARN Streaming

When using a cluster pipeline to read from a Kafka cluster on YARN, you can configure the Kafka Consumer origin to connect securely through SSL/TLS, Kerberos, or both.

Enabling SSL/TLS

Perform the following steps to enable the Kafka Consumer origin in a cluster streaming pipeline on YARN to use SSL/TLS to connect to Kafka.

- To use SSL/TLS to connect, first make sure Kafka is configured for SSL/TLS as described in the Kafka documentation.

- On the General tab of the Kafka Consumer origin in the cluster pipeline, set the Stage Library property to Apache Kafka 0.10.0.0 or a later version.

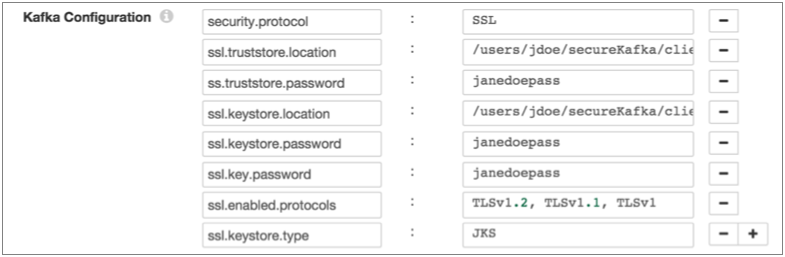

- On the Kafka tab, add the security.protocol Kafka configuration property and set it to SSL.

- Then add and configure the following SSL Kafka properties:

  - ssl.truststore.location

  - ssl.truststore.password

  When the Kafka broker requires client authentication - when the ssl.client.auth broker property is set to "required" - add and configure the following properties:

  - ssl.keystore.location

  - ssl.keystore.password

  - ssl.key.password

  Some brokers might require adding the following properties as well:

  - ssl.enabled.protocols

  - ssl.truststore.type

  - ssl.keystore.type

  For details about these properties, see the Kafka documentation.

- Store the SSL truststore and keystore files in the same location on the Data Collector machine and on each node in the YARN cluster.

For example, the following properties allow the stage to use SSL/TLS to connect to Kafka with client authentication:
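A configuration of this kind can be sketched as a Python dictionary rendered as `name=value` pairs, one per Kafka configuration property entered on the Kafka tab. The property names come from the steps above. The file paths and password placeholders are assumptions for illustration, and `render_properties` is a hypothetical helper, not part of Data Collector.

```python
# Kafka configuration properties for SSL/TLS with client authentication.
# Paths and passwords are placeholders (assumptions), not product defaults.
ssl_properties = {
    "security.protocol": "SSL",
    "ssl.truststore.location": "/path/to/truststore.jks",
    "ssl.truststore.password": "<truststore password>",
    # Needed only when the broker sets ssl.client.auth to "required".
    "ssl.keystore.location": "/path/to/keystore.jks",
    "ssl.keystore.password": "<keystore password>",
    "ssl.key.password": "<key password>",
}


def render_properties(properties):
    """Format a dict as one name=value line per Kafka configuration property."""
    return "\n".join(f"{name}={value}" for name, value in properties.items())


print(render_properties(ssl_properties))
```

The truststore and keystore paths must resolve identically on the Data Collector machine and on every YARN node, as the last step above requires.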

Enabling Kerberos (SASL)

When you use Kerberos authentication, Data Collector uses the Kerberos principal and keytab to connect to Kafka.

Perform the following steps to enable the Kafka Consumer origin in a cluster streaming pipeline on YARN to use Kerberos to connect to Kafka:

- To use Kerberos, first make sure Kafka is configured for Kerberos as described in the Kafka documentation.

- Make sure that Kerberos authentication is enabled for Data Collector, as described in Kerberos Authentication.

- Add the Java Authentication and Authorization Service (JAAS) configuration properties required for Kafka clients based on your installation and authentication type:

  - RPM, tarball, or Cloudera Manager installation without LDAP authentication - If Data Collector does not use LDAP authentication, create a separate JAAS configuration file on the Data Collector machine. Add the following KafkaClient login section to the file:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="<keytab path>"
            principal="<principal name>/<host name>@<realm>";
        };

    For example:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="/etc/security/keytabs/sdc.keytab"
            principal="sdc/sdc-01.streamsets.net@EXAMPLE.COM";
        };

    Then modify the SDC_JAVA_OPTS environment variable to include the following option that defines the path to the JAAS configuration file:

        -Djava.security.auth.login.config=<JAAS config path>

    Modify environment variables using the method required by your installation type.

  - RPM or tarball installation with LDAP authentication - If LDAP authentication is enabled in an RPM or tarball installation, add the properties to the JAAS configuration file used by Data Collector - the $SDC_CONF/ldap-login.conf file. Add the following KafkaClient login section to the end of the ldap-login.conf file:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="<keytab path>"
            principal="<principal name>/<host name>@<realm>";
        };

    For example:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="/etc/security/keytabs/sdc.keytab"
            principal="sdc/sdc-01.streamsets.net@EXAMPLE.COM";
        };

  - Cloudera Manager installation with LDAP authentication - If LDAP authentication is enabled in a Cloudera Manager installation, enable the LDAP Config File Substitutions (ldap.login.file.allow.substitutions) property for the StreamSets service in Cloudera Manager.

    If the Use Safety Valve to Edit LDAP Information (use.ldap.login.file) property is enabled and LDAP authentication is configured in the Data Collector Advanced Configuration Snippet (Safety Valve) for ldap-login.conf field, then add the JAAS configuration properties to the same ldap-login.conf safety valve.

    If LDAP authentication is configured through the LDAP properties rather than the ldap-login.conf safety valve, add the JAAS configuration properties to the Data Collector Advanced Configuration Snippet (Safety Valve) for generated-ldap-login-append.conf field.

    Add the following KafkaClient login section to the appropriate field as follows:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="_KEYTAB_PATH"
            principal="<principal name>/_HOST@<realm>";
        };

    For example:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="_KEYTAB_PATH"
            principal="sdc/_HOST@EXAMPLE.COM";
        };

    Cloudera Manager generates the appropriate keytab path and host name.

- Store the JAAS configuration and Kafka keytab files in the same locations on the Data Collector machine and on each node in the YARN cluster.

- On the General tab of the Kafka Consumer origin in the cluster pipeline, set the Stage Library property to Apache Kafka 0.10.0.0 or a later version.

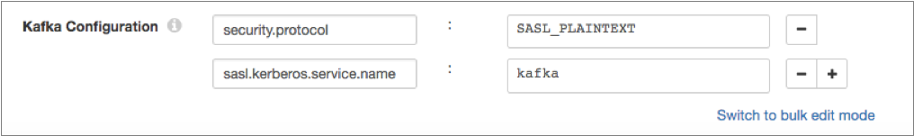

- On the Kafka tab, add the security.protocol Kafka configuration property, and set it to SASL_PLAINTEXT.

- Then, add the sasl.kerberos.service.name configuration property, and set it to kafka.

For example, the following Kafka properties enable connecting to Kafka with Kerberos:
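As a sketch, the two Kafka properties from the steps above can be written as a Python dictionary, alongside a hypothetical helper, `jaas_kafka_client_section`, that renders the KafkaClient login section described earlier. The helper name is an assumption for illustration. The keytab path and principal are the example values used in this section.

```python
# Kafka configuration properties for Kerberos without SSL/TLS.
kerberos_properties = {
    "security.protocol": "SASL_PLAINTEXT",
    "sasl.kerberos.service.name": "kafka",
}


def jaas_kafka_client_section(keytab_path, principal):
    """Render a KafkaClient JAAS login section for keytab-based Kerberos login."""
    return (
        "KafkaClient {\n"
        "    com.sun.security.auth.module.Krb5LoginModule required\n"
        "    useKeyTab=true\n"
        f'    keyTab="{keytab_path}"\n'
        f'    principal="{principal}";\n'
        "};\n"
    )


section = jaas_kafka_client_section(
    "/etc/security/keytabs/sdc.keytab",
    "sdc/sdc-01.streamsets.net@EXAMPLE.COM",
)
print(section)
for name, value in kerberos_properties.items():
    print(f"{name}={value}")
```

Generating the section from one function keeps the file on the Data Collector machine and the copies on the YARN nodes identical, which the steps above require.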

Enabling SSL/TLS and Kerberos

You can enable the Kafka Consumer origin in a cluster streaming pipeline on YARN to use SSL/TLS and Kerberos to connect to Kafka.

To use SSL/TLS and Kerberos, combine the required steps to enable each and set the security.protocol property as follows:

- Make sure Kafka is configured to use SSL/TLS and Kerberos (SASL) as described in the Kafka documentation.

- Make sure that Kerberos authentication is enabled for Data Collector, as described in Kerberos Authentication.

- Add the Java Authentication and Authorization Service (JAAS) configuration properties required for Kafka clients based on your installation and authentication type:

  - RPM, tarball, or Cloudera Manager installation without LDAP authentication - If Data Collector does not use LDAP authentication, create a separate JAAS configuration file on the Data Collector machine. Add the following KafkaClient login section to the file:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="<keytab path>"
            principal="<principal name>/<host name>@<realm>";
        };

    For example:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="/etc/security/keytabs/sdc.keytab"
            principal="sdc/sdc-01.streamsets.net@EXAMPLE.COM";
        };

    Then modify the SDC_JAVA_OPTS environment variable to include the following option that defines the path to the JAAS configuration file:

        -Djava.security.auth.login.config=<JAAS config path>

    Modify environment variables using the method required by your installation type.

  - RPM or tarball installation with LDAP authentication - If LDAP authentication is enabled in an RPM or tarball installation, add the properties to the JAAS configuration file used by Data Collector - the $SDC_CONF/ldap-login.conf file. Add the following KafkaClient login section to the end of the ldap-login.conf file:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="<keytab path>"
            principal="<principal name>/<host name>@<realm>";
        };

    For example:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="/etc/security/keytabs/sdc.keytab"
            principal="sdc/sdc-01.streamsets.net@EXAMPLE.COM";
        };

  - Cloudera Manager installation with LDAP authentication - If LDAP authentication is enabled in a Cloudera Manager installation, enable the LDAP Config File Substitutions (ldap.login.file.allow.substitutions) property for the StreamSets service in Cloudera Manager.

    If the Use Safety Valve to Edit LDAP Information (use.ldap.login.file) property is enabled and LDAP authentication is configured in the Data Collector Advanced Configuration Snippet (Safety Valve) for ldap-login.conf field, then add the JAAS configuration properties to the same ldap-login.conf safety valve.

    If LDAP authentication is configured through the LDAP properties rather than the ldap-login.conf safety valve, add the JAAS configuration properties to the Data Collector Advanced Configuration Snippet (Safety Valve) for generated-ldap-login-append.conf field.

    Add the following KafkaClient login section to the appropriate field as follows:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="_KEYTAB_PATH"
            principal="<principal name>/_HOST@<realm>";
        };

    For example:

        KafkaClient {
            com.sun.security.auth.module.Krb5LoginModule required
            useKeyTab=true
            keyTab="_KEYTAB_PATH"
            principal="sdc/_HOST@EXAMPLE.COM";
        };

    Cloudera Manager generates the appropriate keytab path and host name.

- Store the JAAS configuration and Kafka keytab files in the same locations on the Data Collector machine and on each node in the YARN cluster.

- On the General tab of the Kafka Consumer origin in the cluster pipeline, set the Stage Library property to Apache Kafka 0.10.0.0 or a later version.

- On the Kafka tab, add the security.protocol property and set it to SASL_SSL.

- Then, add the sasl.kerberos.service.name configuration property, and set it to kafka.

- Then add and configure the following SSL Kafka properties:

  - ssl.truststore.location

  - ssl.truststore.password

  When the Kafka broker requires client authentication - when the ssl.client.auth broker property is set to "required" - add and configure the following properties:

  - ssl.keystore.location

  - ssl.keystore.password

  - ssl.key.password

  Some brokers might require adding the following properties as well:

  - ssl.enabled.protocols

  - ssl.truststore.type

  - ssl.keystore.type

  For details about these properties, see the Kafka documentation.

- Store the SSL truststore and keystore files in the same location on the Data Collector machine and on each node in the YARN cluster.
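Taken together, the combined configuration might look like the following Python sketch. `build_sasl_ssl_properties` is a hypothetical helper, and the paths and passwords passed to it are placeholder assumptions. The keystore properties are added only when the broker requires client authentication.

```python
def build_sasl_ssl_properties(truststore, truststore_password,
                              keystore=None, keystore_password=None,
                              key_password=None):
    """Assemble Kafka properties for Kerberos over SSL/TLS (SASL_SSL)."""
    properties = {
        "security.protocol": "SASL_SSL",
        "sasl.kerberos.service.name": "kafka",
        "ssl.truststore.location": truststore,
        "ssl.truststore.password": truststore_password,
    }
    # Keystore settings apply only when ssl.client.auth is "required" on the broker.
    if keystore is not None:
        properties.update({
            "ssl.keystore.location": keystore,
            "ssl.keystore.password": keystore_password,
            "ssl.key.password": key_password,
        })
    return properties


without_client_auth = build_sasl_ssl_properties(
    "/path/to/truststore.jks", "<truststore password>")
with_client_auth = build_sasl_ssl_properties(
    "/path/to/truststore.jks", "<truststore password>",
    keystore="/path/to/keystore.jks",
    keystore_password="<keystore password>",
    key_password="<key password>",
)

for name, value in with_client_auth.items():
    print(f"{name}={value}")
```

Compared with the Kerberos-only setup, the only changed value is security.protocol, which moves from SASL_PLAINTEXT to SASL_SSL. The rest is the union of the two property sets.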

Configuring Cluster Mesos Streaming for Kafka

Complete the following steps to configure a cluster pipeline to read from a Kafka cluster on Mesos: